Crop Recommendation

Random Forest · 22 crops · 2,200 samples

Predicts optimal crop from soil nutrients (N, P, K), pH, temperature, humidity, and rainfall. Returns top-3 recommendations with calibrated confidence scores.

We believe ML models should be transparent about what they can and cannot do. That means validating datasets before training, showing our work, and being honest when things don't work. This page documents our ML research — including our failures.

Our Philosophy

A sophisticated algorithm trained on bad data produces confident nonsense. Before training any model, we run rigorous statistical tests to verify that real, scientifically meaningful relationships exist in the data.

If someone flipped a coin to assign fertilizer labels, no algorithm — no matter how sophisticated — can recover the "true" relationship because there is no true relationship to recover. Data cleaning fixes corrupted signal; it cannot create signal from nothing. This is why we validate every dataset before training.

Mutual information (MI): MI = 0 means the features tell us nothing about the target. MI > 0.1 indicates some predictive relationship. MI > 0.5 indicates strong signal.
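As a rough illustration of the mutual information (MI) check above, here is a minimal sketch for discrete (or pre-binned) variables. The function name, the toy feature `soil_bin`, the label names, and the choice of natural-log units are all assumptions made for the example; the page does not say which estimator or units the thresholds refer to.

```python
import math
from collections import Counter

def mutual_information(xs, ys):
    """Mutual information (in nats) between two equal-length discrete sequences."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px = Counter(xs)
    py = Counter(ys)
    mi = 0.0
    for (x, y), count in joint.items():
        # p(x, y) * log(p(x, y) / (p(x) * p(y))), written with raw counts
        mi += (count / n) * math.log(count * n / (px[x] * py[y]))
    return mi

# Hypothetical toy data: a binned soil feature and two candidate label columns.
soil_bin = ["low", "low", "high", "high"] * 5
informative_label = ["urea" if s == "low" else "dap" for s in soil_bin]
uninformative_label = ["urea", "dap", "urea", "dap"] * 5  # balanced inside each bin

mi_informative = mutual_information(soil_bin, informative_label)
mi_uninformative = mutual_information(soil_bin, uninformative_label)
print(f"informative: {mi_informative:.3f} nats, uninformative: {mi_uninformative:.3f} nats")
```

For continuous features a nearest-neighbour estimator such as scikit-learn's `mutual_info_classif` is a common alternative; whether the notebooks use it is not stated here.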

Correlation (r): |r| < 0.1 means a negligible relationship. |r| between 0.3 and 0.5 indicates a moderate relationship. |r| > 0.5 indicates strong linear correlation.
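The |r| bands above can be sketched with a plain Pearson correlation. The helper names and the toy nitrogen and yield numbers are hypothetical; values of |r| that fall in a range the text does not name are reported as "unlabelled" rather than guessed.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def describe_strength(r):
    """Map |r| onto the bands quoted in the text."""
    a = abs(r)
    if a < 0.1:
        return "negligible"
    if 0.3 <= a <= 0.5:
        return "moderate"
    if a > 0.5:
        return "strong"
    return "unlabelled"  # 0.1 <= |r| < 0.3 is not named in the text

# Hypothetical toy data: nitrogen rate (kg/ha) against two yield columns (t/ha).
nitrogen = [0, 30, 60, 90, 120]
yield_linear = [1.0, 1.5, 2.0, 2.5, 3.0]
yield_unrelated = [2, 1, 3, 1, 2]

r_linear = pearson_r(nitrogen, yield_linear)
r_unrelated = pearson_r(nitrogen, yield_unrelated)
print(describe_strength(r_linear), describe_strength(r_unrelated))
```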

Independence test: p > 0.05 means we cannot reject independence; features and target may be unrelated. p < 0.001 provides strong evidence of real dependence.
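The page does not name the independence test it uses. As one possible sketch, the block below computes a chi-squared statistic on a contingency table and obtains a p-value by permuting the labels. The permutation approach, the function names, and the toy `ph_bin` data are assumptions chosen to keep the example dependency-free.

```python
import random
from collections import Counter

def chi_squared_statistic(xs, ys):
    """Pearson chi-squared statistic for the contingency table of xs against ys."""
    n = len(xs)
    observed = Counter(zip(xs, ys))
    row_totals = Counter(xs)
    col_totals = Counter(ys)
    stat = 0.0
    for x in row_totals:
        for y in col_totals:
            expected = row_totals[x] * col_totals[y] / n
            stat += (observed[(x, y)] - expected) ** 2 / expected
    return stat

def permutation_p_value(xs, ys, n_permutations=999, seed=0):
    """Share of label shuffles whose statistic is at least as large as the observed one."""
    rng = random.Random(seed)
    observed_stat = chi_squared_statistic(xs, ys)
    shuffled = list(ys)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if chi_squared_statistic(xs, shuffled) >= observed_stat:
            at_least_as_extreme += 1
    # Add-one correction so the estimate is never exactly zero.
    return (1 + at_least_as_extreme) / (1 + n_permutations)

# Hypothetical toy data: a binned pH feature and two candidate label columns.
ph_bin = ["acidic"] * 20 + ["alkaline"] * 20
dependent_label = ["lime"] * 20 + ["none"] * 20
independent_label = ["lime", "none"] * 20

p_dependent = permutation_p_value(ph_bin, dependent_label)
p_independent = permutation_p_value(ph_bin, independent_label)
print(f"dependent: p = {p_dependent:.3f}, independent: p = {p_independent:.3f}")
```

With 999 permutations the smallest reportable p-value is 0.001, so a stricter threshold needs more permutations or an analytic chi-squared p-value.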

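Baseline model: For classification, accuracy must exceed chance, which is 1/num_classes when classes are balanced. For regression, R² > 0 means the features explain some variance beyond always predicting the mean. This is the ultimate "proof in the pudding" test.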
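The baseline comparison above comes down to two small calculations, sketched here. The function names and the toy yield values are hypothetical; the accuracy figures and class counts are the ones quoted on this page (14% over 7 fertilizer classes, 99.5% over 22 crops), and the chance level assumes balanced classes.

```python
def beats_chance(accuracy, num_classes):
    """True if accuracy is above the 1/num_classes chance level (balanced classes assumed)."""
    return accuracy > 1.0 / num_classes

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Classification: figures quoted on this page.
fertilizer_passes = beats_chance(0.14, 7)   # 0.14 is below 1/7, so this fails
crop_passes = beats_chance(0.995, 22)

# Regression: hypothetical observed yields against two sets of predictions.
observed = [2.0, 3.0, 4.0, 5.0]
mean_only = [3.5, 3.5, 3.5, 3.5]            # always predicts the mean
useful = [2.1, 2.9, 4.2, 4.8]

r2_mean_only = r_squared(observed, mean_only)
r2_useful = r_squared(observed, useful)
print(fertilizer_passes, crop_passes, r2_mean_only, round(r2_useful, 2))
```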

Case Study: Failure

Transparency means showing failures, not just successes. Here's a dataset we initially planned to use — and why our validation process caught it before it could harm farmers.

This popular Kaggle dataset contains 100,000 observations with soil properties (N, P, K, pH), climate data (temperature, humidity), and 7 fertilizer classes. At first glance, it seemed perfect for training a fertilizer recommendation model. Our validation revealed the truth: the fertilizer labels appear to be randomly assigned with no relationship to the features.

If fertilizer recommendations were based on soil conditions, we'd expect different fertilizers to be recommended for different soil types. Instead, every fertilizer appears with almost exactly 14.3% frequency (1/7 = random) regardless of soil nitrogen, pH, or any other feature.
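The frequency argument above can be checked by tabulating label shares inside each feature bin. This is only a sketch: the bin names and the fertilizer names `F1` to `F7` are placeholders (the page does not list the seven classes), and the toy rows are constructed by hand rather than drawn from the Kaggle file.

```python
from collections import Counter, defaultdict

def label_shares_by_bin(bins, labels):
    """For each feature bin, return the share of rows carrying each label."""
    counts = defaultdict(Counter)
    for b, label in zip(bins, labels):
        counts[b][label] += 1
    return {
        b: {label: c / sum(counter.values()) for label, c in counter.items()}
        for b, counter in counts.items()
    }

def max_share_gap(shares, all_labels):
    """Largest deviation of any in-bin label share from the uniform 1/len(all_labels)."""
    uniform = 1.0 / len(all_labels)
    return max(
        abs(per_bin.get(label, 0.0) - uniform)
        for per_bin in shares.values()
        for label in all_labels
    )

FERTILIZERS = ["F1", "F2", "F3", "F4", "F5", "F6", "F7"]  # placeholder class names
nitrogen_bin = ["low_N"] * 14 + ["high_N"] * 14

# Labels spread evenly in every bin, as described for the rejected dataset.
uniform_labels = FERTILIZERS * 4
# Labels that depend on the bin, as we would expect from real recommendations.
bin_driven_labels = ["F1"] * 14 + ["F2"] * 14

gap_uniform = max_share_gap(label_shares_by_bin(nitrogen_bin, uniform_labels), FERTILIZERS)
gap_bin_driven = max_share_gap(label_shares_by_bin(nitrogen_bin, bin_driven_labels), FERTILIZERS)
print(f"uniform gap: {gap_uniform:.3f}, bin-driven gap: {gap_bin_driven:.3f}")
```

A gap near zero in every bin is the pattern described above: each class sits at about 1/7 no matter what the soil looks like.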

Production Models

After rigorous validation, these models passed our signal tests and provide real predictive value. Each model includes confidence scores, rationale, and links to open research notebooks.

Random Forest · 22 crops · 2,200 samples

Predicts optimal crop from soil nutrients (N, P, K), pH, temperature, humidity, and rainfall. Returns top-3 recommendations with calibrated confidence scores.
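Returning the top-3 recommendations amounts to ranking per-class scores, roughly as sketched below. The function name and the probability values are made up for illustration. With a scikit-learn classifier such scores would typically come from `predict_proba`, and how the production scores are calibrated is not described on this page.

```python
def top_k(class_probabilities, k=3):
    """Return the k highest-scoring (label, probability) pairs, best first."""
    ranked = sorted(class_probabilities.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k]

# Hypothetical per-crop scores for one soil and climate sample.
scores = {"rice": 0.62, "maize": 0.21, "jute": 0.09, "cotton": 0.05, "coffee": 0.03}

recommendations = top_k(scores)
for crop, probability in recommendations:
    print(f"{crop}: {probability:.0%}")
```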

XGBoost · Continuous kg/ha · 12,081 samples

Estimates maize yield based on fertilizer inputs (N, P, K), soil properties, and climate. Trained on Harvard Dataverse Sub-Saharan Africa trials with real agronomic relationships.

Yield Model + Grid Search

Uses the yield prediction model to find optimal N-P-K rates for target yield or budget. Includes economic analysis (cost, revenue, profit) and regional fertilizer product mapping.
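A grid search of this kind can be sketched as follows. Everything here is a stand-in: `toy_yield_kg_per_ha` is a hand-written diminishing-returns curve, not the trained model, and the rate grid, nutrient prices, grain price, and budget are invented numbers in arbitrary currency units.

```python
from itertools import product

def toy_yield_kg_per_ha(n, p, k):
    """Hypothetical diminishing-returns response; NOT the trained yield model."""
    return (1500.0 + 30.0 * n - 0.1 * n**2
            + 20.0 * p - 0.1 * p**2
            + 10.0 * k - 0.1 * k**2)

def best_rates_within_budget(yield_fn, rates, prices, grain_price, budget):
    """Exhaustively search N-P-K rates and return the most profitable affordable option."""
    best = None
    for n, p, k in product(rates, repeat=3):
        cost = n * prices["N"] + p * prices["P"] + k * prices["K"]
        if cost > budget:
            continue  # skip combinations the farmer cannot afford
        revenue = yield_fn(n, p, k) * grain_price
        profit = revenue - cost
        if best is None or profit > best["profit"]:
            best = {"N": n, "P": p, "K": k,
                    "cost": cost, "revenue": revenue, "profit": profit}
    return best

RATES = range(0, 121, 20)                  # kg/ha of each nutrient (assumed grid)
PRICES = {"N": 1.0, "P": 2.0, "K": 1.5}    # cost per kg of nutrient (assumed)
GRAIN_PRICE = 0.2                          # revenue per kg of maize (assumed)
BUDGET = 150.0                             # spending limit per hectare (assumed)

best = best_rates_within_budget(toy_yield_kg_per_ha, RATES, PRICES, GRAIN_PRICE, BUDGET)
print(best)
```

Exhaustive search is affordable here because the grid has only 7 × 7 × 7 combinations; a finer grid or more inputs would call for a smarter optimizer.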

Research Evidence

These charts are produced directly from our research notebooks and represent the actual behaviour of our production models. We publish them to be transparent about what our models have and have not learned.

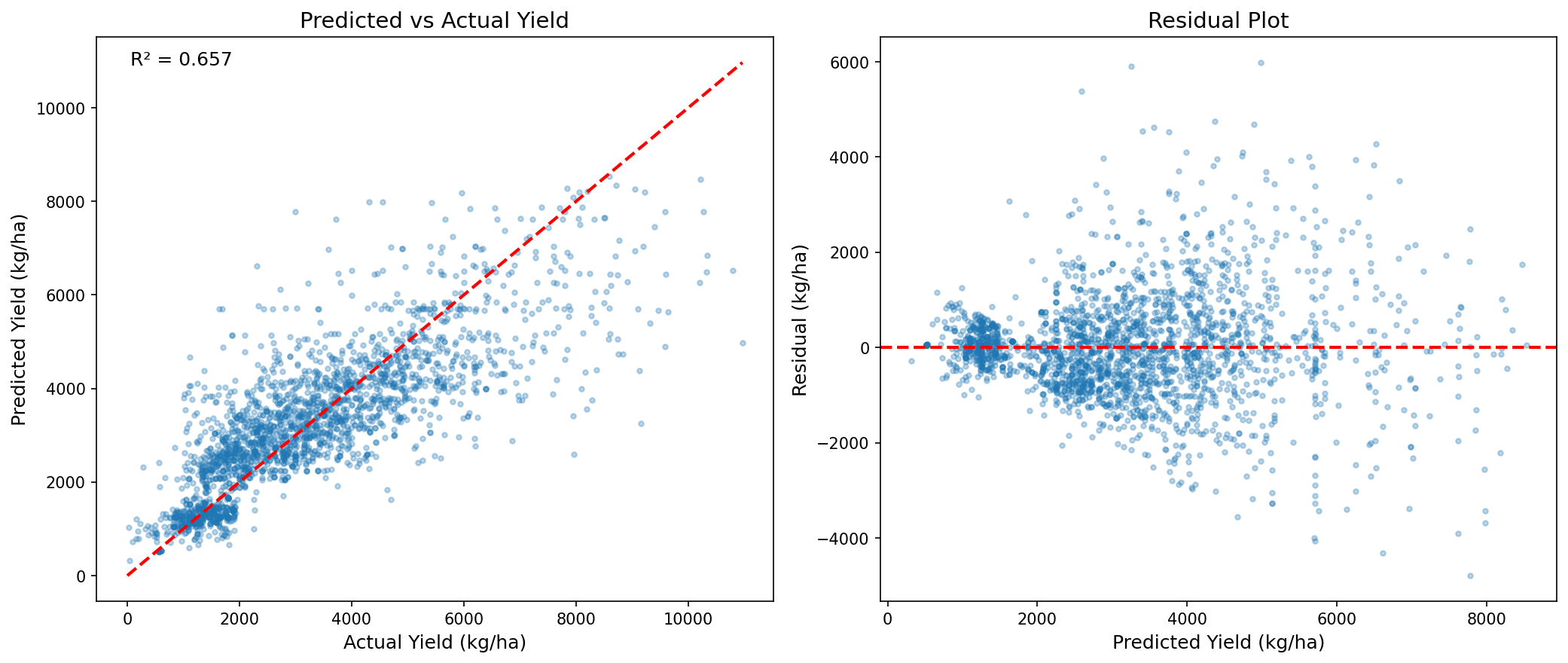

This chart shows whether the model tracks real maize yield behaviour instead of simply memorizing averages. A tighter relationship means stronger predictive value.

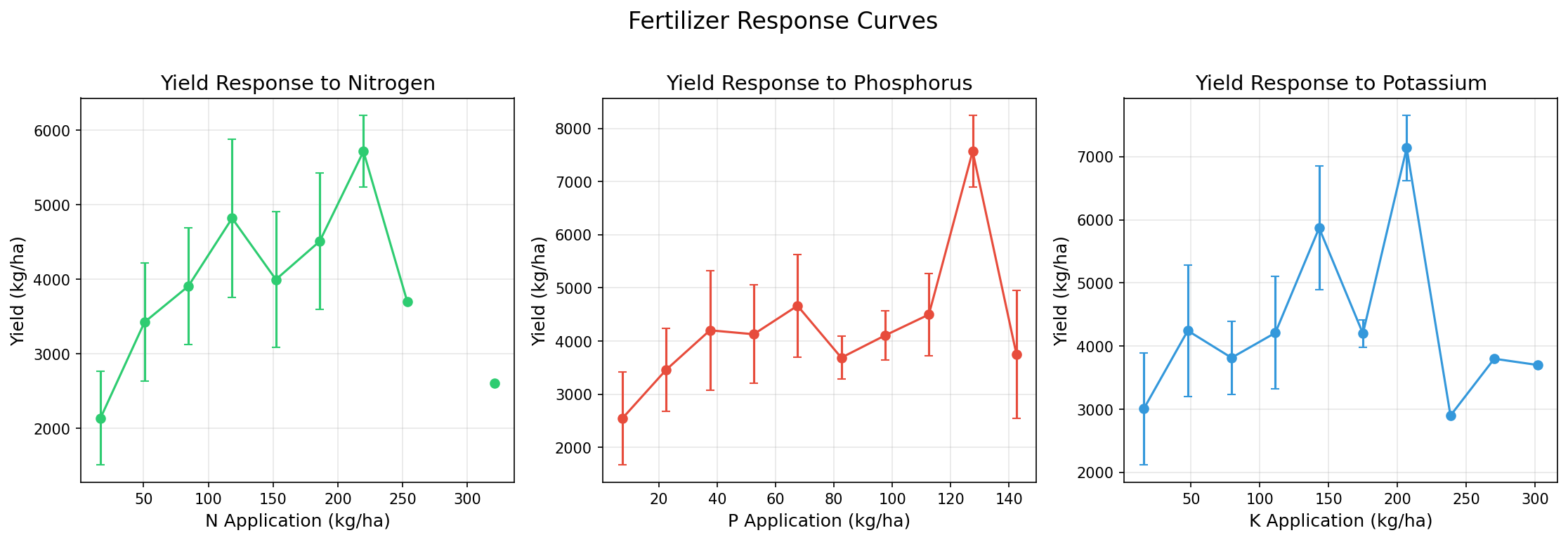

These response curves help explain how the model expects yield to change as nutrient inputs vary. This is important for making recommendations interpretable.

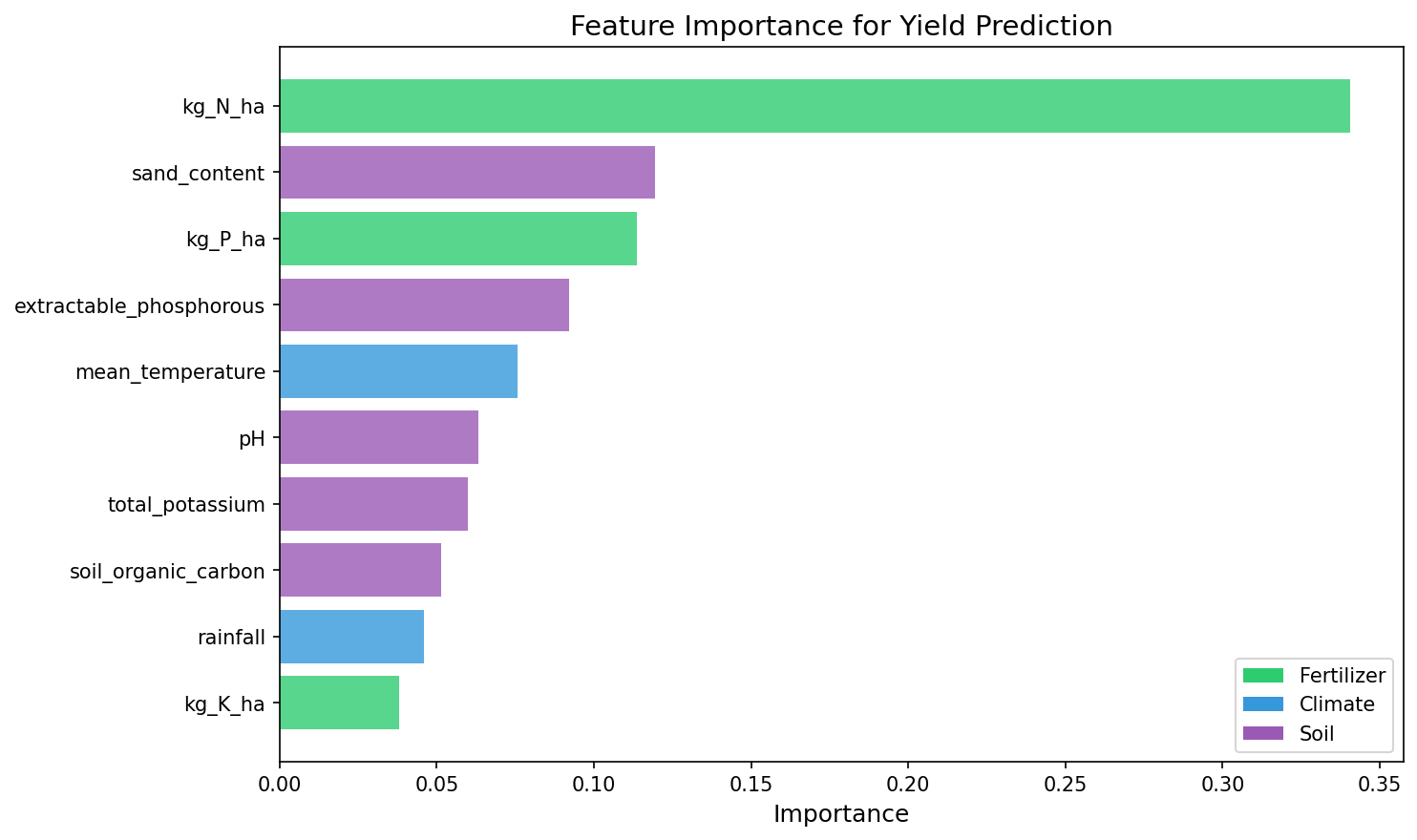

Feature importance shows which inputs most influenced prediction behaviour. This helps expose whether the model is relying on agronomically meaningful variables.

Global Scale

A key insight: for yield prediction and fertilizer optimization we use regression (continuous outputs) instead of classification (labels), because plant biology and soil chemistry follow universal laws that don't change at borders.

Classification approach: "Kenya soil type A → Use DAP" cannot generalize to Somalia (no "Somalia" class).

Regression approach: f(N, P, K, rainfall, pH, temp) → yield works everywhere because the model learned universal agronomic relationships, not geographic labels.

A maize plant in Somalia responds to nitrogen the same way as a maize plant in Kenya; the underlying biology doesn't change.

We validated generalization by grouping data by climate/soil environment and testing the model on held-out environments. Even when predicting for "unseen regions," the model maintains predictive power — demonstrating that universal agronomic relationships transfer.
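Holding out whole environments, as described above, can be sketched as a leave-one-group-out split. The environment names and the helper function are hypothetical, and the notebooks may group the data differently. scikit-learn offers `LeaveOneGroupOut` and `GroupKFold` for the same purpose.

```python
def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices) for each distinct group."""
    for held_out in sorted(set(groups)):
        train_idx = [i for i, g in enumerate(groups) if g != held_out]
        test_idx = [i for i, g in enumerate(groups) if g == held_out]
        yield held_out, train_idx, test_idx

# Hypothetical environment label for each training row.
environments = [
    "humid_clay", "humid_clay",
    "semi_arid_sand", "semi_arid_sand",
    "highland_loam",
]

splits = list(leave_one_group_out(environments))
for held_out, train_idx, test_idx in splits:
    # Fit on train_idx and score on test_idx; the model never sees the held-out environment.
    print(held_out, train_idx, test_idx)
```

The point of splitting by group rather than by row is that rows from the same environment never appear on both sides, so the score reflects performance on conditions the model has not seen.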

Transparency

Unlike opaque AI systems, FildraAI provides structured traces for every prediction: what the model predicts, why it made that prediction, and where to be cautious.

Validation Summary

Every dataset we use passes rigorous statistical validation. Here's the complete comparison showing why we use some datasets and reject others.

| Metric | Kaggle Fertilizer | Harvard Yield | Crop Recommendation |
|---|---|---|---|
| Samples | 100,000 | 12,081 | 2,200 |
| Mutual Information (max) | 0.004 | 1.11 | 0.89 |
| Correlation (max \|r\|) | 0.005 | 0.52 | 0.45 |
| Independence test | p = 0.66 | p < 0.001 | p < 0.001 |
| Model performance | 14% = random | R² = 0.68 | 99.5% acc |
| Verdict | REJECTED | PRODUCTION | PRODUCTION |

Boundaries

Being transparent about limitations is as important as showcasing capabilities. Here's what our models don't do — and when to rely on local expertise instead.

These models are decision-support tools, not replacements for agronomic expertise. Predictions should be validated against local conditions, farmer experience, and extension officer guidance. We recommend starting with small trial plots before scaling recommendations across entire farms.

All our ML research is open and reproducible. View the signal analysis, model training, and validation code in Google Colab. We believe transparency builds trust.